The extraction step focuses on collecting data. Many enterprise data sources are transactional systems where the data is stored in relational databases that are designed for high throughput and frequent write and update operations. These online transaction processing (OLTP) systems are optimized for operational data defined by schemas and divided into tables, rows, and columns. The transactional systems may run on local servers or on SaaS platforms. Other potential sources include flat files such as HTML or log files.

Transformation alters the structure, format, or values of the extracted data through different data transformation operations. Business requirements and the characteristics of the destination system determine what transformations are necessary. Transformations fall into three general categories: validating, cleansing, and preparing data for analysis. Some of the most important transformations are mapping data types from source to target systems, flattening semistructured data intended for a relational database, and data validation.

Most organizations now access and use diverse data sources, from operational, financial, and sales databases to application APIs and scraped web data. This data may be unstructured and therefore unsuitable for use in data analytics processes.
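The three transformations named above (flattening semistructured data, mapping data types, and validation) can be sketched in a few lines of standard-library Python. This is only an illustration: the record layout, the `TARGET_TYPES` schema, and the function names are all hypothetical and would depend on the actual source and destination systems.

```python
from datetime import date
from typing import Any


def flatten(record: dict[str, Any], parent: str = "", sep: str = "_") -> dict[str, Any]:
    """Flatten nested dicts into a single level, joining keys with `sep`."""
    flat: dict[str, Any] = {}
    for key, value in record.items():
        name = f"{parent}{sep}{key}" if parent else key
        if isinstance(value, dict):
            flat.update(flatten(value, name, sep))
        else:
            flat[name] = value
    return flat


# Hypothetical target schema: column name -> converter for the target type.
TARGET_TYPES = {
    "order_id": int,
    "customer_name": str,
    "customer_address_city": str,
    "total": float,
    "ordered_on": date.fromisoformat,
}


def map_types(row: dict[str, Any]) -> dict[str, Any]:
    """Convert each known column to its target type; unknown columns are dropped."""
    return {col: convert(row[col]) for col, convert in TARGET_TYPES.items() if col in row}


def validate(row: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the row passed."""
    problems = [f"missing column: {col}" for col in TARGET_TYPES if col not in row]
    if "total" in row and row["total"] < 0:
        problems.append("total must be non-negative")
    return problems


# Hypothetical semistructured source record (for example, from an API response).
source = {
    "order_id": "1001",
    "customer": {"name": "Ada", "address": {"city": "Oslo"}},
    "total": "19.90",
    "ordered_on": "2024-03-01",
}

row = map_types(flatten(source))
errors = validate(row)
```

Keeping each transformation in its own function makes it easier to add or remove steps as business requirements or the destination system change.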

Related ETL tools and tutorials include:

- Talend, a popular ETL and data integration tool that enables users to design, test, and deploy data integration workflows.
- Apache NiFi, an open-source ETL tool that provides real-time data integration and processing capabilities.
- ETL with Saturn Cloud, a tutorial on using Saturn Cloud for scalable ETL tasks, leveraging the power of Dask and cloud-based infrastructure.

Understanding ETL (extract, transform, load)

Big data and cloud data warehouses are helping modern organizations leverage business intelligence (BI) and analytics for decision-making and new insights. Maintaining a data warehouse requires building a data ingestion process, and that in turn requires an understanding of ETL, its use cases, and its relationship with other components in the data analytics stack.

What is ETL?

ETL (extract, transform, load) is a general process for replicating data from source systems to target systems. It encompasses aspects of obtaining, processing, and transporting information so an enterprise can use it in applications, reporting, or analytics. Let's take a more detailed look at each step.

Extraction involves accessing source systems and reading and copying the data they contain. A data engineer may extract source data to a temporary location such as a data lake or a staging table in a database in anticipation of the steps that follow.
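As a rough illustration of extracting source data into a staging table, the sketch below copies rows from one SQLite database to another using Python's built-in `sqlite3` module. The in-memory databases, the `orders` and `stg_orders` tables, and the `extract_to_staging` function are assumptions made for the example; a real pipeline would connect to its own source and staging systems.

```python
import sqlite3

# Hypothetical source system: an in-memory SQLite database standing in for a real one.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, total TEXT)")
source.executemany(
    "INSERT INTO orders (id, customer, total) VALUES (?, ?, ?)",
    [(1, "Ada", "19.90"), (2, "Grace", "5.00")],
)
source.commit()

# Hypothetical staging area: a separate database with a loosely typed landing table.
staging = sqlite3.connect(":memory:")
staging.execute("CREATE TABLE stg_orders (id, customer, total, extracted_at TEXT)")


def extract_to_staging(src: sqlite3.Connection, dst: sqlite3.Connection) -> int:
    """Read every row from the source table and copy it, unchanged, into staging."""
    rows = src.execute("SELECT id, customer, total FROM orders ORDER BY id").fetchall()
    dst.executemany(
        "INSERT INTO stg_orders (id, customer, total, extracted_at) "
        "VALUES (?, ?, ?, datetime('now'))",
        rows,
    )
    dst.commit()
    return len(rows)


copied = extract_to_staging(source, staging)
staged = staging.execute(
    "SELECT id, customer, total FROM stg_orders ORDER BY id"
).fetchall()
```

The staging table leaves values as they arrived and only adds an extraction timestamp, so type mapping and cleansing can happen in a later step.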

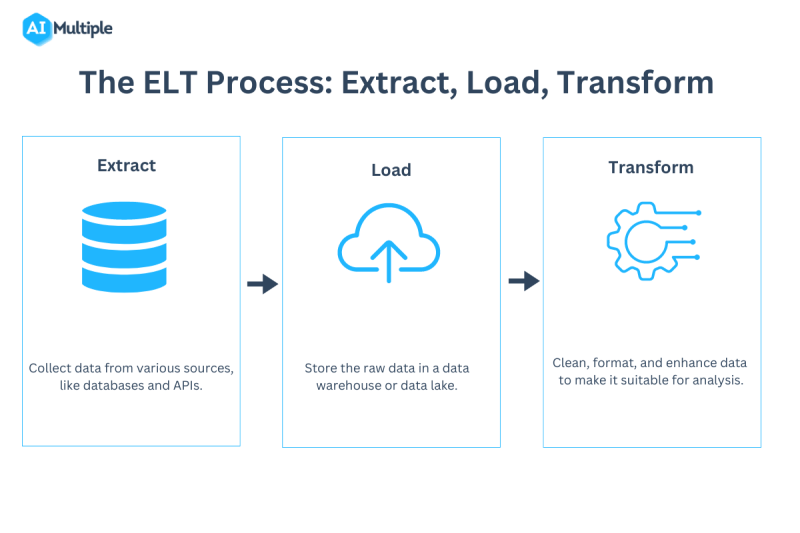

ETL (Extract, Transform, Load) is a data integration process that involves extracting data from various sources, transforming it into a structured and usable format, and loading it into a target data repository, such as a data warehouse, database, or data lake. ETL is a critical component of data management and business intelligence workflows, allowing organizations to consolidate and analyze data from multiple sources for reporting, analytics, and decision-making purposes.

ETL facilitates data integration and management:

- Extracts data: ETL retrieves data from various sources, such as databases, APIs, files, or web services, and imports it into a staging area.
- Transforms data: ETL processes and cleans the data, applying transformations like data cleansing, normalization, aggregation, and encoding to ensure consistency and compatibility.
- Loads data: ETL transfers the transformed data into a target data repository, such as a data warehouse or database, for storage and further analysis.

ETL offers several benefits for data management and analytics:

- Data consolidation: ETL enables organizations to consolidate data from multiple sources, providing a unified view for analysis and reporting.
- Improved data quality: ETL processes ensure data quality by cleaning, transforming, and validating the data before loading it into the target repository.
- Efficient data processing: ETL automates and streamlines data integration, reducing manual effort and improving productivity.
- Enhanced decision-making: ETL supports data-driven decision-making by providing clean, consistent, and reliable data for analysis.

To learn more about ETL and its applications, you can explore the following resources:

- The Data Warehouse ETL Toolkit, a book on best practices for ETL design, development, and maintenance.
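To make the extract, transform, and load steps described above concrete, here is a minimal end-to-end sketch using only the Python standard library. The CSV text, the `sales` table, and the `extract`, `transform`, and `load` functions are all hypothetical stand-ins, with an in-memory SQLite database playing the role of the target repository.

```python
import csv
import io
import sqlite3

# Hypothetical source: CSV text standing in for a file export.
RAW_CSV = """name,country,amount
 Ada ,no,19.90
Grace,US,5
,us,3.50
"""


def extract(text: str) -> list[dict[str, str]]:
    """Extract: read the source rows into a staging list of dicts."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list[dict[str, str]]) -> list[tuple[str, str, float]]:
    """Transform: cleanse (trim, drop rows without a name) and normalize values."""
    cleaned = []
    for row in rows:
        name = row["name"].strip()
        if not name:
            continue  # cleansing: skip incomplete records
        cleaned.append((name, row["country"].strip().upper(), float(row["amount"])))
    return cleaned


def load(rows: list[tuple[str, str, float]], conn: sqlite3.Connection) -> None:
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales (name TEXT, country TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)
    conn.commit()


warehouse = sqlite3.connect(":memory:")  # stand-in for a data warehouse
load(transform(extract(RAW_CSV)), warehouse)

# Once loaded, the data can be queried for analysis, here as an aggregate per country.
totals = warehouse.execute(
    "SELECT country, SUM(amount) FROM sales GROUP BY country ORDER BY country"
).fetchall()
```

Each stage takes the previous stage's output as its input, which mirrors the order of the three steps and lets any one of them be replaced without touching the others.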
